All rights reserved, Habeas 2026

See our Privacy Policy

In early 2025, an Australian lawyer appearing in the Federal Circuit and Family Court filed submissions containing case citations that did not exist. When the opposing party's lawyers pointed this out, the practitioner, who had used ChatGPT to identify cases, tried to fix the problem by sending an amended submission directly to the judge's associate, without telling the other side. That compounded the original error with a conduct breach. The matter was referred to the NSW Legal Services Commissioner.

Valu v Minister for Immigration and Multicultural Affairs (No 2) [2025] FedCFamC2G 95 ('Valu') was not an isolated incident. In the same year, the Full Federal Court dealt with a self-represented litigant who cited a false case in support of a recusal application (Luck v Secretary, Services Australia [2025] FCAFC 26). In Queensland, the Civil and Administrative Tribunal recorded a practitioner citing a case that did not exist (LJY v Occupational Therapy Board of Australia [2025] QCAT 96). In Victoria, Rishi Nathwani KC filed submissions in a murder case containing fabricated case citations and fake quotes from a parliamentary speech, and publicly apologised to the court.

Four documented incidents in a single year, across four different Australian jurisdictions. In most of the Australian cases, the conduct reflected poor verification practice rather than deliberate deception. But the pattern is consistent: practitioners working without an adequate system for checking what AI produced before it went on the record, and courts responding by referring the matter to regulators.

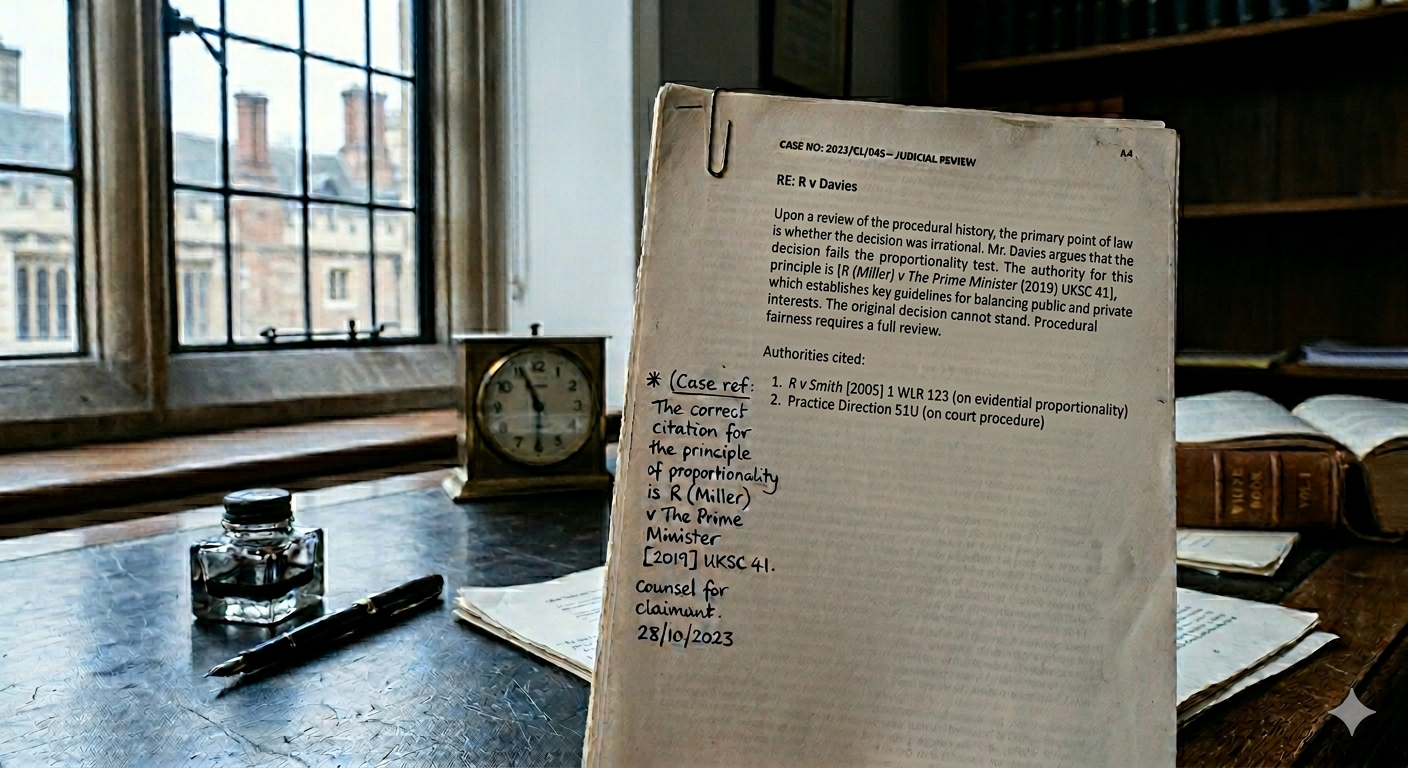

The Federal Court's General Practice Note GPN-AI, issued in October 2023, establishes that practitioners are expected to disclose AI use if required to do so by a judge or registrar, and that parties and practitioners ordinarily should disclose to each other the assistance provided by AI programs. Disclosure is not framed as an automatic obligation in all circumstances, but as a baseline expectation that courts can and do enforce. More absolute is the verification requirement: practitioners must confirm that AI-generated content has been checked for accuracy before it is filed.

The practice note treats AI as a tool whose output remains the practitioner's responsibility. A solicitor who files an AI-generated document without reading it carries the same professional exposure as one who files a document they personally wrote but did not check. Multiple state courts issued equivalent guidance during 2024 and 2025, progressively closing off the argument that a practitioner had no notice of what was expected.

Most law firms built their AI policies to manage internal risk, covering decisions such as which tools staff can access and who needs approval for new software. Those are legitimate concerns, but they do not map precisely onto what courts are asking for.

What court disclosure frameworks require, and what most internal policies omit, is a named verification step before any AI-drafted content is filed, a working definition of what counts as AI-assisted work for disclosure purposes, and documentation of who reviewed what and when. The practice notes do not define 'AI-assisted work' precisely, which creates a gap that individual practitioners fill however they see fit. The Valu decision is instructive: the error was not only that the lawyer used AI carelessly, but that no verification step existed to catch it. The safer position, absent clearer guidance, is to treat any substantive AI contribution to the content of a filed document as disclosable.

The pattern of regulatory referrals in 2025 and 2026 confirms that Australian courts are prepared to treat AI misuse as a conduct matter. In Oberoi v Douglas [2026] VSCA 31, the Court of Appeal referred a solicitor to the Legal Services Commissioner, stating that reliance on authorities which do not exist 'represents a falling short of the standards of diligence that a member of the public is entitled to expect of a reasonably competent lawyer.' In Re Walker [2025] VSC 714, Moore J expressed serious concerns about a solicitor's conduct in relation to AI use and required the implementation of a formal verification protocol before further AI-assisted work could be filed.

The legal services regulatory framework already supports this approach: the obligation to act in a client's best interests includes filing accurate documents, and the duty of candour to the court applies regardless of whether an error originated in AI or carelessness. The rules of professional conduct prohibit conduct likely to diminish public confidence in the administration of justice, a category into which fabricated case citations comfortably fall.

A compliant framework starts with a working definition of what counts as AI-assisted work for disclosure purposes. From there it needs a named verification and sign-off step before anything AI-drafted reaches a court, and documentation of that review: which tool was used, who checked the output, when. Re Walker provides a useful template: the solicitor was required to check every case citation against an authoritative database prior to filing, identify any unreported case expressly, and record AI use internally with key outputs independently verified before filing. Some firms are building this into matter management systems as a checkpoint. That requires no significant technology investment.
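For firms that do want to build the checkpoint into a matter management system, the record-keeping described above could be sketched roughly as follows. This is a minimal illustration only, not a court-endorsed format: the class names, field names, and the `ready_to_file` rule are all assumptions made for the example, and the second citation is a deliberately hypothetical placeholder.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CitationCheck:
    """One cited authority and the result of checking it (hypothetical structure)."""
    citation: str
    verified_in_database: bool  # confirmed against an authoritative database
    unreported: bool = False    # unreported cases are flagged expressly


@dataclass
class VerificationRecord:
    """Internal record of AI use on a document before filing (hypothetical structure)."""
    matter_id: str
    ai_tool: str            # which tool was used
    reviewer: str           # who checked the output
    reviewed_at: datetime   # when the check was done
    citations: list[CitationCheck] = field(default_factory=list)

    def unverified(self) -> list[str]:
        """Return every citation that has not been confirmed in a database."""
        return [c.citation for c in self.citations if not c.verified_in_database]

    def ready_to_file(self) -> bool:
        """Assumed rule: a named reviewer exists and no citation is unverified."""
        return bool(self.reviewer.strip()) and not self.unverified()


record = VerificationRecord(
    matter_id="M-0001",  # hypothetical matter number
    ai_tool="ChatGPT",
    reviewer="Supervising solicitor",
    reviewed_at=datetime.now(timezone.utc),
    citations=[
        CitationCheck("Luck v Secretary, Services Australia [2025] FCAFC 26", True),
        # Placeholder standing in for a citation nobody has checked yet.
        CitationCheck("Placeholder v Placeholder (unchecked)", False),
    ],
)

if not record.ready_to_file():
    print("Do not file. Unverified citations:", record.unverified())
```

The design choice worth noting is that filing is blocked by default: the document is cleared only when every citation has been affirmatively marked as checked, rather than flagged only when someone notices a problem.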

The policy also needs a review process given how quickly new court guidance is being issued. A document drafted in late 2023 does not reflect the state of court requirements as they stood by mid-2025.

The documented cases provide a reasonably clear map of what failure looks like, and the pattern across all of them is consistent: practitioners working without verification built into their workflow. A policy that addresses the disclosure obligation, the verification step, and the documentation is a different document from the one most firms currently have, and it is not a particularly high bar to clear.